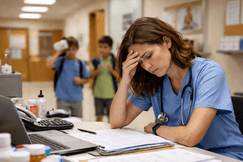

AI Told a Nurse to Load a Dialysis Patient with Fluids. He Caught It Just in Time.

- A hospital adopted a sepsis protocol that flagged a patient for IV fluids.

- The only problem? A nurse's bedside assessment revealed the patient had a dialysis catheter.

- The charge nurse pressed the nurse to follow the AI-driven protocol despite his concerns.

"We have five senses, and computers only get input," one nurse said, amid growing concern about AI protocols.

Adam Hart faced a critical decision. An elderly woman arrived at St. Rose Dominican Hospital in Henderson, Nevada, with dangerously low blood pressure. The hospital's AI system flagged her for sepsis, a life-threatening condition. Protocol dictated immediate IV fluids, but Hart, a nurse with 14 years of experience, noticed a dialysis catheter below her collarbone during his physical examination.

A dialysis catheter signals kidneys that cannot clear excess fluid, so flooding her with fluids risked pulmonary edema. When Hart raised his concern, the charge nurse insisted on following the AI alert. Hart refused, and a physician intervened, ordering dopamine instead. The hospital later emphasized that its AI tools are meant to support, not supersede, clinician judgment.

The patient avoided a potentially fatal complication, but the incident highlighted a growing issue in healthcare: AI-driven protocols, enforced through institutional policies, that clash with nurses' clinical judgment.

AI Implementation Has Been Plagued with Challenges

This tension between algorithmic certainty and clinical expertise has become an ongoing challenge for the nursing profession.

Predictive models, from sepsis alerts to automated monitoring, are now routine in hospitals. While these tools promise to ease the burden on an overworked, understaffed workforce, many nurses find they create more problems than they solve.

At UC Davis Health, for example, BioButton devices were introduced in 2023 to monitor oncology bone marrow transplant patients. The small sensors tracked vital signs and issued alerts, but nurses like Melissa Beebe found the alerts vague and unhelpful.

"It was overdoing it but not really giving great information," Beebe told Scientific American. Nurses were often pulled away from patients they had already identified as concerning.

After a year, UC Davis discontinued the technology, acknowledging that nurses identified deteriorating patients faster than the AI.

Or, consider a Washington healthcare system that invested in expensive robots to "ease" the burden on nurses and other staff by transporting lab samples and other critical supplies. After only two years, the hospital "fired" the robots because they got in nurses' way and got stuck in elevators while trying to move between floors.

More Than the Machine

The limitations of AI in healthcare are not just technical but philosophical. Elven Mitchell, an intensive care nurse of 13 years now at Kaiser Permanente Hospital in Modesto, Calif., pointed out that even the most advanced AI algorithm can never see and assess a patient the way a nurse can.

"I can't tell you how many times I have that feeling, I don't feel right about this patient. It could be just the way their skin looks or feels to me," he explained. "Sometimes you can see a patient and, just looking at them, not doing well. It doesn't show in the labs, and it doesn't show on the monitor."

"We have five senses, and computers only get input," Mitchell added.

Electronic medical records capture lab results and imaging, but miss critical information like how a patient walks or answers questions. Experienced clinicians rely on subtle visual and tactile cues that algorithms cannot replicate.

"The models will never have access to all of the data that the provider has," explains Ziad Obermeyer, a health policy professor at UC Berkeley.

This isn't technophobia. Nurses welcome validated tools that improve care but mistrust systems that fail to deliver on their promises.

Epic's sepsis-prediction algorithm, for instance, was widely adopted before evaluations revealed it was less accurate than advertised. Nurses bore the brunt of managing false positives and institutional policies based on flawed technology.

Mitchell estimates that half the alerts from his hospital's monitoring system are false positives, pulling nurses away from high-risk patients.

"Maybe in 50 years it will be more beneficial, but as it stands, it is a trying-to-make-it-work system," he says.

Bringing Nurses In to Solve the AI Problem

Some institutions are addressing these challenges by involving clinicians, including nurses, in AI development. Stanford Health Care and Mount Sinai Health System have brought AI development in-house, focusing on adoption and trust.

Nigam Shah, Stanford's chief data scientist, emphasizes including nurses in the process. "Ask nurses first, doctors second, and if the doctor and nurse disagree, believe the nurse," he advises.

At Mount Sinai, a wound-care nurse proposed an AI tool to predict bedsores, which saw high adoption rates due to her involvement in training peers.

One avenue some hospitals are pursuing is encouraging nurses to develop AI solutions that are actually useful, but the approach is not as widespread as nurses would like.

Other institutions continue to push for more autonomous systems that reduce the nursing time spent on certain tasks. For instance, Mount Sinai introduced Sofiya, a soft-spoken AI bot presented as a woman in scrubs, which calls cardiac catheterization patients to give them instructions before the procedure.

While hospital leadership claims Sofiya saved over 200 nursing hours in five months, nurses like Denash Forbes argue its work still requires verification, questioning the efficiency gains.

Similarly, AI-powered clinical documentation tools have underdelivered, with time savings far less than vendors promised.

"Everybody rushed in saying these things are magical; they're gonna save us hours. Those savings did not materialize," Shah told Scientific American.

Nurses Address AI Concerns

Frustration is growing over the healthcare industry's emphasis on AI at a time when nurses are already struggling.

In November, New York State Nurses Association members protested outside City Hall, criticizing investments in AI while units remain understaffed.

A 2024 nursing survey confirmed that burnout, staffing shortages, and workplace violence are driving nurses away, pressures that AI has yet to alleviate meaningfully.

The path forward lies in recognizing that clinical judgment, shaped by training and experience, cannot be automated. Algorithms can augment this judgment but must explain their reasoning, identify specific triggers, and integrate seamlessly into workflows. Most importantly, implementation must involve the nurses who use these tools, as their proximity to patients provides insights no algorithm can replicate.

Adam Hart's decision in the emergency department exemplifies the irreplaceable value of clinical expertise.

He saw what the algorithm couldn't: a patient whose unique circumstances required deviation from protocol.

In the age of AI, the ability to override a prompt when clinical reality demands it may be nursing's most vital contribution to patient safety.
