AI Charting Tools Show Racial Bias and Make Errors—Nurses Are Still Liable, Study Warns

- AI scribes used by roughly 30% of physician practices are producing hallucinations, including fabricated diagnoses and exams that never happened, with significantly higher error rates for Black patients.

- The rapid adoption of AI documentation tools has outpaced FDA regulation, as these tools are classified as administrative rather than medical devices, leaving nurses legally responsible for verifying and co-signing AI-generated notes that may contain dangerous inaccuracies.

- Researchers from Columbia University School of Nursing are calling for mandatory vendor transparency, independent validation standards, and clear regulatory frameworks that include nurses in governance before further deployment.

A major study published in npj Digital Medicine is sounding the alarm on AI scribes in healthcare, warning that the rush to deploy these documentation tools is outpacing validation and oversight. Led by researchers from Columbia University School of Nursing, the analysis found that while roughly 30% of physician practices now use AI scribes, the technology comes with hallucinations, racial bias, and dangerous documentation gaps that no regulator is currently policing.

For nurses, the stakes could not be higher. AI-generated clinical notes require mandatory nurse review, verification, and co-signature before they become part of the legal medical record. That means nurses carry full professional and legal responsibility for documentation they didn't write, produced by tools that can fabricate diagnoses, invent physical exams, and systematically underperform for Black patients.

The findings come as ambient AI listening tools expand rapidly into nursing workflows, promising to cut charting time by 20-40%. But as the study's authors put it, the central question is no longer whether to adopt these tools, but "how to do so responsibly."

What the Research Found

The study, authored by Maxim Topaz, Ph.D., of Columbia Nursing, Laura Maria Peltonen of the University of Eastern Finland, and Zhihong Zhang, Ph.D., of Columbia University, examined the growing body of evidence on AI scribe performance.

The scale of adoption is staggering: one large health system reported 7,000 physicians using AI scribes across 2.5 million patient encounters in just 14 months.

Modern AI scribes have reported error rates of 1-3%, which looks low next to the 7-11% error rates of older automated speech recognition systems, but the types of errors are alarming. Researchers identified four critical failure modes:

- AI hallucinations that fabricate physical exams and diagnoses

- Omissions of essential symptoms and assessment findings

- Misinterpretations of treatments

- Speaker attribution errors when multiple people are in the room

Perhaps most troubling, the study cited research showing that approximately 50% of patient problems discussed verbally during clinical encounters were never documented in electronic health records, and 21% of interventions discussed were never recorded. The promise of AI to close documentation gaps appears far from fulfilled.

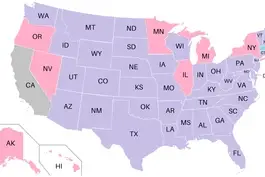

Racial Bias and the Regulatory Gap

One of the study's most concerning findings involves systematic racial disparities in AI scribe performance.

- Speech recognition systems underlying these tools show significantly higher error rates for African American patients and speakers with non-standard accents.

- Patients with limited English proficiency or from marginalized communities may receive inadequate documentation of their concerns, potentially leading to missed clinical information that affects their care.

A separate Associated Press investigation, carried by PBS, into OpenAI's Whisper, an AI transcription tool used in medical settings, found even more disturbing results.

The tool was documented fabricating:

- Racial commentary

- Violent rhetoric

- Incorrect medical terminology

In one case, the audio "after she got the telephone, he began to pray" was transcribed as "he would help me get my shirt, kill me. And I was he began to pray."

Making matters worse, these tools currently face no FDA regulation. Because AI scribes are classified as administrative tools rather than medical devices, they bypass federal oversight entirely. The researchers argue this regulatory gap leaves both patients and clinicians exposed, particularly as clinical decisions increasingly rely on AI-generated documentation.

What Nurses Need to Know

This story matters to every nurse working in a facility that uses or plans to adopt AI documentation tools. Because nurses are legally required to review, verify, and co-sign AI-generated notes, they are the last line of defense against errors reaching the permanent medical record. A hallucinated diagnosis or omitted symptom that slips through could create liability issues and, more importantly, compromise patient care.

The study's authors recommend that healthcare organizations implement rigorous independent validation of AI scribe tools before deployment, mandate vendor transparency about error rates and limitations, and establish clear protocols for nurse training and quality assurance. They also call for multi-level governance structures that include nurses, not just physicians and administrators, in decisions about how these tools are implemented.

Nurses should also be aware that early research on tools like Epic's new AI assistants and Microsoft's Dragon Copilot shows real time savings. However, 29% of nurses in one survey reported that AI-generated notes did not allow editing, leading to inaccurate or incomplete charting. If your facility is rolling out AI scribes, advocate for your seat at the table in the implementation process and never co-sign a note you haven't thoroughly reviewed.
