Nurses Are Being Asked to Use AI Tools They Don't Trust & Were Never Trained On (Survey)

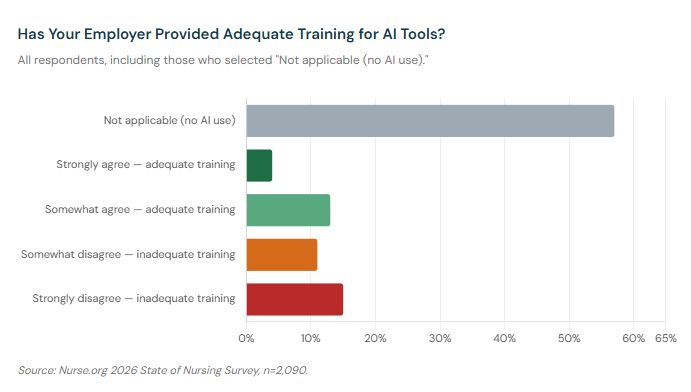

- Only 25% of nurses have used AI tools at work in the past 30 days, and among nurses with AI tools in their workplace, 60% say their employer has not provided adequate training for the tools they're expected to use.

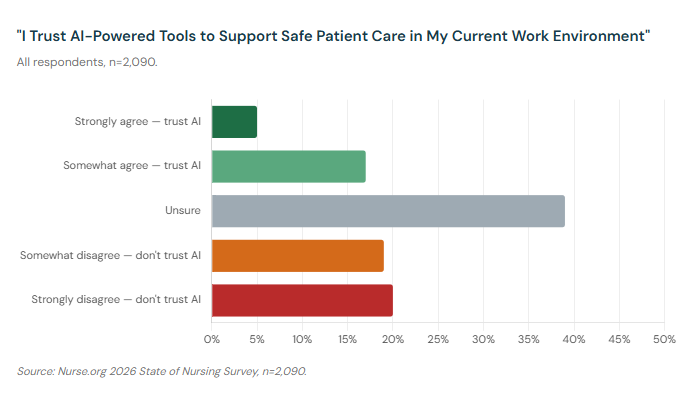

- Just 22% of nurses trust AI-powered tools to support safe patient care. Another 39% say they're simply unsure — a figure that reflects confusion and a lack of preparation, not acceptance.

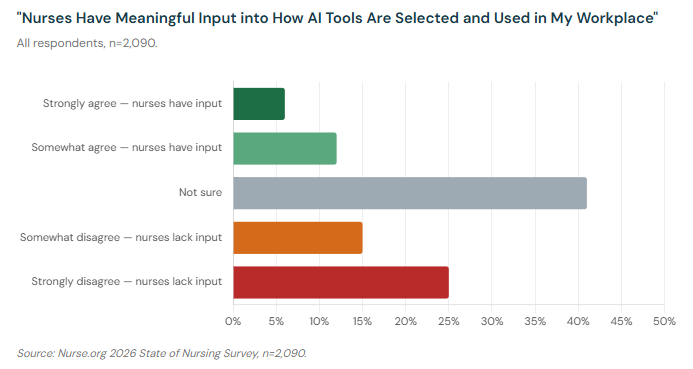

- 40% of nurses say they have no meaningful input into how AI tools are selected and deployed in their workplace, and 41% aren't sure. Only 19% believe nurses have a real seat at the table.

Healthcare institutions are moving fast on artificial intelligence. AI-powered documentation tools, clinical decision support systems, scheduling algorithms, and patient monitoring platforms are being deployed across hospitals and health systems at a pace that is outrunning the ability of nurses to understand, evaluate, or influence them.

For the first time, our 2026 State of Nursing Survey asked nurses directly about their experience with AI at work: how much they're using it, whether they trust it, whether they were trained on it, and whether they had any say in which tools their employer chose. What the data describes is a technology rollout that is happening largely around nurses rather than with them.

The Numbers at a Glance

Nurses and AI: 2026 Survey Results

- 25% of nurses have personally used AI tools at work in the past 30 days

- 60% of nurses with AI exposure say their employer hasn't provided adequate training

- 22% of nurses trust AI tools to support safe patient care

- 40% say nurses have no meaningful input into how AI tools are selected

Only 25% of nurses in our survey say they've personally used AI-powered or automated tools as part of their nursing work in the past 30 days. The majority — 68% — say they haven't used AI at work at all, and 7% aren't sure. Whether that reflects institutional choices, limited availability, or personal reluctance, the practical reality is that most nurses are not yet working regularly alongside AI systems.

But "most nurses aren't using AI yet" and "AI is being deployed in healthcare" are both true at the same time. The gap between them is where the problems live.

The Training Gap

Among nurses who have any exposure to AI tools at their workplace — those who answered the training question rather than selecting "not applicable" — only 40% say their employer has provided adequate training. The remaining 60% say it has not.

That 60% figure is striking on its own. But it becomes more concerning when you consider what inadequate training means in a clinical context. A nurse who doesn't fully understand how a documentation AI generates its outputs may not know when it's wrong. A nurse who hasn't been trained on the limitations of a clinical decision support tool may override correct intuition in favor of an algorithm's recommendation — or, conversely, may dismiss a useful alert because they don't trust a system they've never been taught to use.

The Institute for Healthcare Improvement has noted that relying on clinicians alone to double-check the accuracy of AI results is "an unreliable safety strategy," and that deploying AI without adequate safeguards carries real risks for both patients and staff.

One nurse captured the generational dimension of this gap: "No training on AI for intergenerational nurses. No support for intergenerational RNs on computer skills. No plan from government to support RNs — healthcare workers that survived the COVID epidemic."

The training gap is largest where clinical complexity is highest. ER nurses, ICU nurses, and medical-surgical nurses — the specialties dealing with the highest patient acuity — are among the least likely to report adequate preparation for the tools being deployed in their units.

A Trust Problem That Isn't Going Away

When we asked nurses whether they trust AI-powered tools to support safe patient care in their current work environment, only 22% said yes. Nearly 40% said they actively distrust AI for patient care. And another 39% said they were simply unsure.

The "unsure" figure deserves as much attention as the "distrust" figure. Thirty-nine percent of nurses can't say with confidence whether the AI tools in their workplace are safe for patient care. That is not a minor implementation challenge. That is a signal that healthcare institutions are moving faster than the trust — and the evidence base — can support.

Notably, nurses who are actually using AI tools are far more trusting of them than those who aren't: 53% of nurses who use AI say they trust it for patient care, compared to just 12% of non-users. That gap could reflect genuine positive experiences with well-designed tools, or it could reflect a form of familiarity bias. Either way, it underscores that exposure and training matter, and that nurses who are handed AI tools without either are unlikely to arrive at trust on their own.

A 2024 survey by National Nurses United found that AI often contradicts and undermines nurses' own clinical judgment, with 69% of nurses saying that when their employer uses algorithmic systems to measure patient acuity, the results don't match their own assessment. The NNU called for stricter regulation and greater nurse input on how AI is deployed. Our data suggests the situation has not improved since.

"We are seeing a tremendous loss of critical thinking in nurses," one respondent wrote. "AI and algorithms have increasingly left nurses in a task job as opposed to a career."

Another nurse was more direct: "I have been a nurse for 34 years and it is an ever-changing profession. I do not feel AI can be useful in the profession."

No Seat at the Table

The data point that most clearly explains the trust and training problems is this: 40% of nurses say nurses have no meaningful input into how AI or automation tools are selected and used in their workplace. Another 41% say they're not sure. Only 19% believe nurses have meaningful input into these decisions.

Think about what that means. The people who spend the most time with patients — who will actually use these tools in high-stakes moments, who understand the clinical context in which they're being deployed — are being excluded from the decisions about which tools get purchased, how they're configured, and what workflows they're integrated into.

A 2024 survey by McKinsey and the American Nurses Foundation found that while nearly two-thirds of nurses said they'd like more AI tools available at work, their top concerns were accuracy, a lack of human interaction, and insufficient guidance on using the technology. Nursing leaders noted that "quite often we have these technology companies who do not have nurses on staff, who do not have nurses involved in creating these technologies."

That pattern — technology designed without nurses, then deployed on nurses — runs directly through our data. And it has a predictable result: tools that don't fit clinical reality, training that doesn't happen, and a workforce that is skeptical of systems it had no hand in choosing.

One nurse described the downstream effect in stark terms: "AI has taken over our scheduling and ruined it. Some of our security guards are 60+ years old. Staffing is getting less. More paperwork is being added to the job."

Another nurse raised the same structural critique that nursing leaders voiced in the external research: technology companies often build these tools without nurses on staff or involved in their design. Both point to the same root cause: the people with the most at stake are the last to be consulted.

Who Is Using AI — and Who Isn't

AI adoption is not uniform across nursing. Specialty and education level both predict how likely a nurse is to be using AI tools at work.

Nurse educators report the highest use, with 55% saying they've used AI tools in the past 30 days, followed by administration and leadership roles (49%) and case management (43%). These roles involve more administrative work, documentation, and non-bedside functions, where AI tools currently have the most practical utility.

At the other end, nurses in long-term care (8%), oncology (10%), and PACU (12%) report the lowest AI use. Bedside-heavy, high-acuity specialties (ICU at 15%, ER at 18%, telemetry at 12%) also fall well below the overall average of 25%.

The education pattern is similar. Nursing students (50%), NPs (47%), and DNPs (45%) report the highest rates of AI use, while RN-ADNs (17%) and LPN/LVNs (16%) report the lowest. Students aside, higher education levels generally track with greater AI exposure, which likely reflects both the nature of the roles these nurses hold and the environments in which they practice.

Age also matters. Younger nurses are roughly twice as likely to be using AI at work as the oldest nurses: 35% of nurses aged 25–29 report AI use, compared to just 16% of nurses 65 and older. The nursing workforce overall skews older: the 2024 National Nursing Workforce Survey found the median age of RNs is now 50, up from 46 in 2022, with nurses 65 and older representing 18.3% of the workforce, the largest single age bracket. That means AI is arriving in a profession where the most experienced segment of the workforce is also the least likely to be using it or receiving training on it.

In Their Own Words

The open comments from nurses on AI consistently touched on three themes: concern about the erosion of clinical judgment, frustration with being excluded from decisions, and a fear that the tools being deployed serve institutional efficiency rather than patient care.

- "Technology and the EMR have taken nurses away from the bedside. No longer are we focused on our patients, but rather on completing the voluminous check boxes which are supposed to reflect our patients' condition and care. Give me back my pen and paper so I can describe patients' conditions and concerns in my own words. As a nursing supervisor, I see my nurses all at the nurses' station clicking and typing away before even laying eyes on patients."

- "After 45+ years of nursing I feel as though the profession has lost the human aspect of care. We are required to spend more time clicking boxes and meeting algorithms than laying hands on our patients. Sometimes spending 10 minutes listening to a patient voice concerns or fears negates anxiety meds."

- "It isn't for everyone. I find my concern is people are getting into nursing just for the paycheck. Add AI into the mix — if your AI answer is wrong, how will YOU know?"

- "I've been in nursing 40 years. Patient care has changed. More time with AI than with patients."

Not all nurses are opposed. A small but meaningful group sees real potential:

- "Looking forward to AI applications making our jobs easier, and excited for nutrition and functional health advancements."

- "I love the nursing profession — it has been my life for 38 years. I'm not sure about AI in nursing but I'm glad I'm getting out before it's really going. I think it could help with the understaffing problem, but feel the human aspect is still needed."

Among the nurses in our survey who are using AI, 56% say it has reduced the time they spend on documentation and administrative tasks. That benefit is real, and it matters — documentation burden is one of the most consistent drivers of nurse dissatisfaction. But it is reaching only a fraction of the workforce, and it is arriving without the training, trust-building, or nurse input that would make it stick.

What Responsible AI Adoption Looks Like

The nurses in our survey aren't anti-technology. They're anti-technology-done-badly. And the data makes clear what "badly" looks like: tools chosen without nurse input, deployed without adequate training, and measured by institutional efficiency metrics rather than patient care outcomes or clinician experience.

What would the alternative look like? Nurses in our survey are consistent on this point, and broader research has echoed them for years. Responsible AI adoption in nursing means involving nurses in procurement and implementation decisions, not as token consultants but as clinical experts whose day-to-day reality determines whether a tool succeeds or fails. It means adequate training before deployment, not after. It means evaluation frameworks that include nurse experience, not just operational cost savings. And it means preserving clinical judgment as the final word, not ceding it to an algorithm.

National Nurses United has stated that "nurses embrace worker-centric technologies that complement bedside skills and improve quality of care" while opposing technologies that undermine clinical judgment. Their position calls for implementation guided by the precautionary principle: the burden of proof should be on the institution to demonstrate that a tool is safe and effective, not on nurses to prove it isn't.

The tools being deployed today will shape the profession for a generation. Whether they make nursing better (safer for patients, more sustainable for nurses) or become one more thing done to nurses rather than with them depends on decisions being made right now. And right now, 40% of nurses say they have no seat at the table where those decisions are happening.

That is the problem worth solving.

This article is part of Nurse.org's ongoing coverage of the 2026 State of Nursing Survey.